Anthropic introduced Agent Skills a while ago, and I hadn't gotten around to diving into that area of AI, until today. The concept is open source and not exclusive to Anthropic or Claude, but everything you will see below was done in Claude Desktop.

But first, I want to thank my dear friend Kay for inspiring today’s post. I highly recommend subscribing to Kay’s AI For You newsletter on LinkedIn. Kay ‘does this for real’, so when Kay talks about things like agent memories, it comes from real-world experience!

Wait, are skills just ‘fancy commands’?

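Sure, a skill looks like a prompt, or even an MCP tool description. But in practice, skills let agents perform tasks consistently across sessions, commands, and even different agents, and they use fewer tokens, since only a skill’s name and description sit in the agent’s context until the skill is actually needed!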

SQLcl is an MCP Server; SQLcl has a LOAD command

What if I could feed the HELP text for the LOAD command into Claude’s Skill Creator and have it turn that into a skill for me?

I did just that. I gave it a name and a general description, then asked it to read the HELP text for the LOAD command and to use the SQLcl MCP tool, ‘run-sqlcl’, to run the command and get the information needed to understand the feature.

Claude then created a mock CSV file to experiment with the skill and the tools, and tested everything on its own until the skill was deemed ‘ready’.

I then ran it through several real-world scenarios and had Claude update the skill after each one, learning from every iteration. I’ll share my work-in-progress skill source below if you’re interested. More importantly, I’ll be working on publishing a ton of skills in our public GitHub repo so you can use them to get the most out of our SQLcl commands.

Some example scenarios

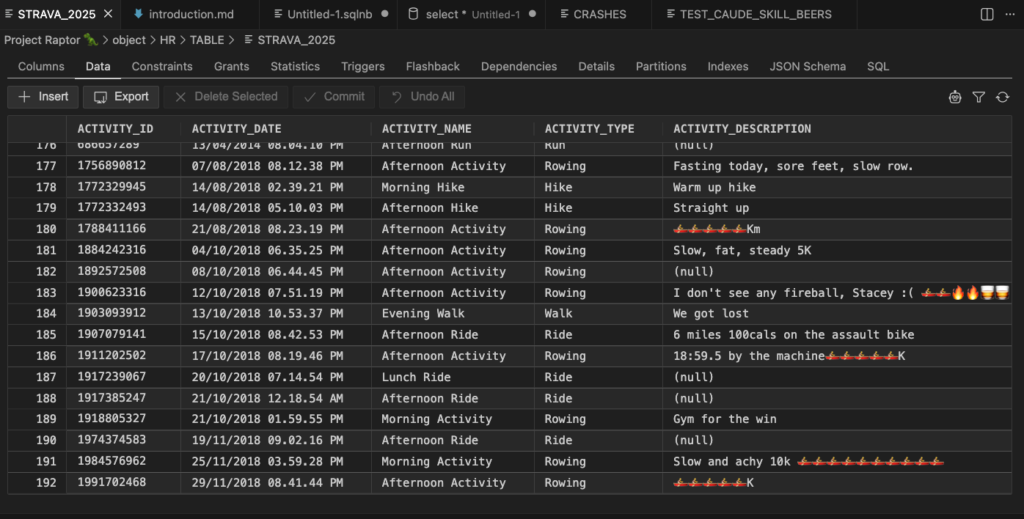

Agents can use skills as needed, just as they do with MCP Servers and tools. I asked it to load my Strava data into a table and gave it a file to work with. Using the skill, it handled the rest on its own.

In short, it now knows to:

- inspect the file

- find the date columns and work out their date format

- decide whether SQLcl can size the columns itself or whether it needs to write the table DDL first, depending on the number of rows in the file

- set the appropriate LOAD options

- run the LOAD command

- review the results and fix any problems

- compare the number of rows in the file with the number of rows in the table
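Here is a minimal Python sketch of the first few checks in that list: counting data rows, spotting date columns, and flagging files longer than SQLcl’s scan window. It is an illustration under stated assumptions, not what the skill actually runs: the candidate date masks, the Oracle-to-Python format mapping, the helper names, and the “more than 5,000 rows means write the DDL” rule are all hypothetical choices.

```python
import csv
from datetime import datetime

# Hypothetical candidate masks: Oracle DATE_FORMAT mask -> equivalent Python strptime pattern.
# (Oracle HH is the 12-hour clock, like %I; HH24 is the 24-hour clock, like %H.)
CANDIDATE_DATE_FORMATS = {
    "MON DD, YYYY, HH:MI:SS AM": "%b %d, %Y, %I:%M:%S %p",
    "YYYY-MM-DD HH24:MI:SS": "%Y-%m-%d %H:%M:%S",
    "YYYY-MM-DD": "%Y-%m-%d",
    "MM/DD/YYYY": "%m/%d/%Y",
}

# Scan limit for column auto-sizing, as stated in the skill; treated here as a fixed threshold.
SCAN_LIMIT = 5000


def detect_date_format(values):
    """Return the first Oracle mask whose Python equivalent parses every non-empty value."""
    non_empty = [v.strip() for v in values if v.strip()]
    if not non_empty:
        return None
    for oracle_mask, py_fmt in CANDIDATE_DATE_FORMATS.items():
        try:
            for v in non_empty:
                datetime.strptime(v, py_fmt)
        except ValueError:
            continue  # at least one value does not fit this mask; try the next one
        return oracle_mask
    return None


def profile_csv(lines):
    """Profile CSV text given as any iterable of lines, such as an open file."""
    reader = csv.reader(lines)
    header = next(reader)
    rows = [row for row in reader if row]  # skip blank lines
    date_formats = {}
    for i, name in enumerate(header):
        values = [row[i] for row in rows if i < len(row)]
        mask = detect_date_format(values)
        if mask:
            date_formats[name] = mask
    return {
        "row_count": len(rows),
        "date_formats": date_formats,
        # Hypothetical rule: past the scan limit, write the DDL instead of trusting auto-sizing.
        "needs_explicit_ddl": len(rows) > SCAN_LIMIT,
    }
```

In practice you would pass an open file, e.g. `with open("activities.csv", newline="", encoding="utf-8") as f: profile_csv(f)`. Trying masks in a fixed order and requiring every non-empty value to parse keeps the check conservative: a column is only reported as a date if the whole column agrees with one mask.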

And here is an example run-sqlcl tool call that did all the work:

```json
{
  "model": "claude-sonnet-4-6",
  "sqlcl": "SET LOAD SCAN_ROWS 5000\nSET LOAD CLEAN_NAMES TRANSFORM\nSET LOAD ERRORS UNLIMITED\nSET LOAD DATE_FORMAT MON DD, YYYY, HH:MI:SS AM\nLOAD STRAVA_2025 /Users/thatjeffsmith/activities.csv NEW"
}
```
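To make the link between the pre-flight checks and this tool call concrete, here is a minimal Python sketch that assembles the same script and serializes the `run-sqlcl` arguments. The function names `build_load_script` and `run_sqlcl_arguments` are hypothetical helpers for illustration, not part of SQLcl or its MCP server, and the only `SET LOAD` options used are the ones in the call above.

```python
import json


def build_load_script(table, csv_path, date_format=None, scan_rows=5000, new_table=True):
    """Hypothetical helper: assemble a SQLcl LOAD script from detected settings."""
    lines = [
        f"SET LOAD SCAN_ROWS {scan_rows}",
        "SET LOAD CLEAN_NAMES TRANSFORM",
        "SET LOAD ERRORS UNLIMITED",
    ]
    if date_format:
        lines.append(f"SET LOAD DATE_FORMAT {date_format}")
    # NEW asks LOAD to create the table and then load it; omit it to load an existing table.
    lines.append(f"LOAD {table} {csv_path}" + (" NEW" if new_table else ""))
    return "\n".join(lines)


def run_sqlcl_arguments(script, model="claude-sonnet-4-6"):
    """Hypothetical helper: serialize the two arguments seen in the tool call above."""
    return json.dumps({"model": model, "sqlcl": script}, indent=2)
```

The whole script travels as one string with embedded newlines, which is why a single tool call can carry several `SET LOAD` commands plus the `LOAD` itself.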

That’s my Strava import, handled in just 2 SQLcl MCP tool calls: one to perform the load, and another to check the number of rows.
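Checking the row count sounds trivial, but counting physical lines (for example with `wc -l`) overcounts when a quoted field contains line breaks. A minimal Python sketch, assuming the table’s count comes from a separate `SELECT COUNT(*)` call and is simply passed in; `csv_record_count` and `load_complete` are hypothetical names.

```python
import csv


def csv_record_count(lines, has_header=True):
    """Count logical CSV records; a quoted field may span several physical lines."""
    count = sum(1 for row in csv.reader(lines) if row)  # blank lines are ignored
    return max(count - 1, 0) if has_header else count


def load_complete(lines, table_count, has_header=True):
    """Compare the file's record count with the table's COUNT(*) result (passed in)."""
    expected = csv_record_count(lines, has_header)
    return expected == table_count, expected
```

For a file with a header, one record whose `notes` field spans two lines, and one plain record, there are four physical lines but only two data records, so a naive line count would expect three rows and report a mismatch that isn’t there.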

And my table:

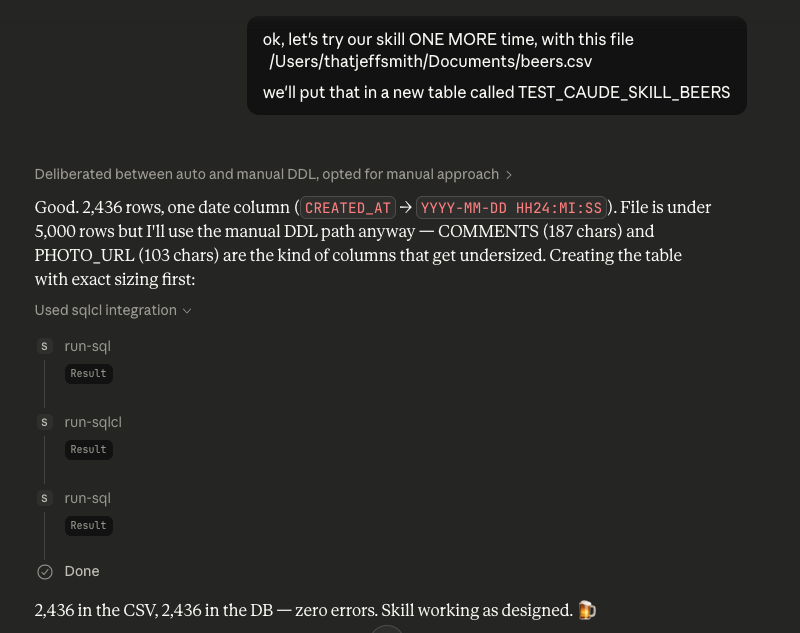

Here’s my Untappd import, handled in just 3 SQLcl MCP tool calls: one to create the table, one to perform the load, and one to check the number of rows.

This one takes an extra step because the file has more rows than SQLcl will scan, and some values further down the file require the columns to be defined explicitly, rather than letting SQLcl size them based on the first X rows it reads.
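To see why sizing from the first rows can fail, here is a minimal Python sketch that measures column widths over only the first N data rows versus the whole file, then writes a `CREATE TABLE` statement from the full scan. Every column is typed as `VARCHAR2(n CHAR)` (or `CLOB` past 4,000) purely to keep the sketch short; the helper names and the `clean_name` rule are hypothetical stand-ins, not SQLcl’s own sizing or name transformation.

```python
import csv
import re


def column_widths(lines, limit=None):
    """Max value length per column over the first `limit` data rows (all rows if None)."""
    reader = csv.reader(lines)
    header = next(reader)
    widths = [0] * len(header)
    for i, row in enumerate(reader):
        if limit is not None and i >= limit:
            break
        for j, value in enumerate(row[: len(header)]):
            widths[j] = max(widths[j], len(value))
    return header, widths


def clean_name(name):
    # Hypothetical identifier cleanup: runs of non-word characters become "_", then uppercase.
    return re.sub(r"\W+", "_", name).strip("_").upper()


def create_table_ddl(table, lines):
    """Size every column from a full scan, so late, wide values still fit."""
    header, widths = column_widths(lines)
    cols = []
    for name, width in zip(header, widths):
        # VARCHAR2 tops out at 4000 bytes with the standard MAX_STRING_SIZE; fall back to CLOB.
        col_type = "CLOB" if width > 4000 else f"VARCHAR2({max(width, 1)} CHAR)"
        cols.append(f"  {clean_name(name)} {col_type}")
    return f"CREATE TABLE {table} (\n" + ",\n".join(cols) + "\n)"
```

With 5,000 rows of `IPA` followed by one `Imperial Oatmeal Stout`, a 5,000-row sample sizes the beer column at 3 characters while the full scan says 22; that is the kind of gap that makes an explicit `CREATE TABLE` the safer first call.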

And my table:

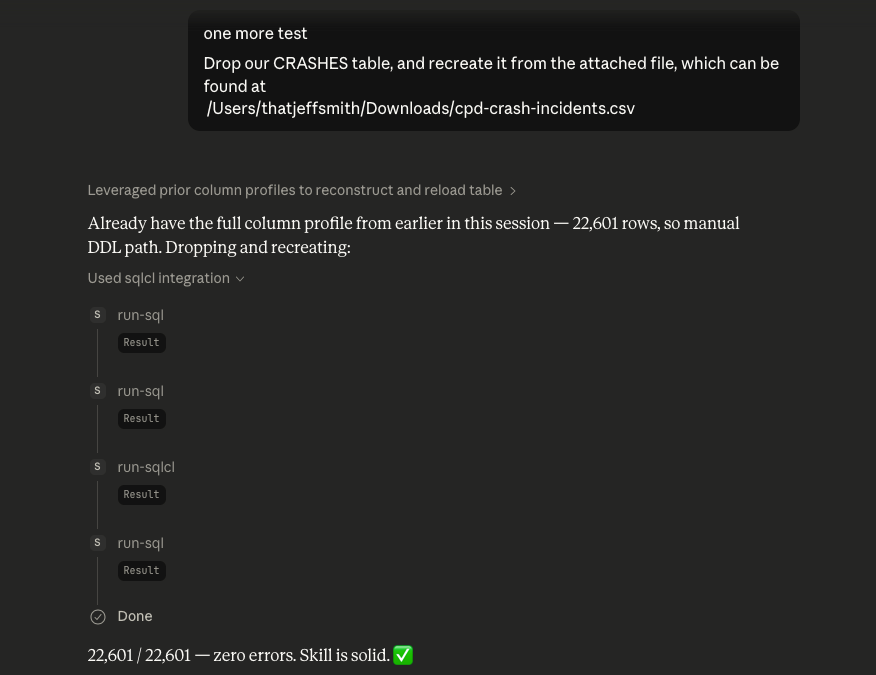

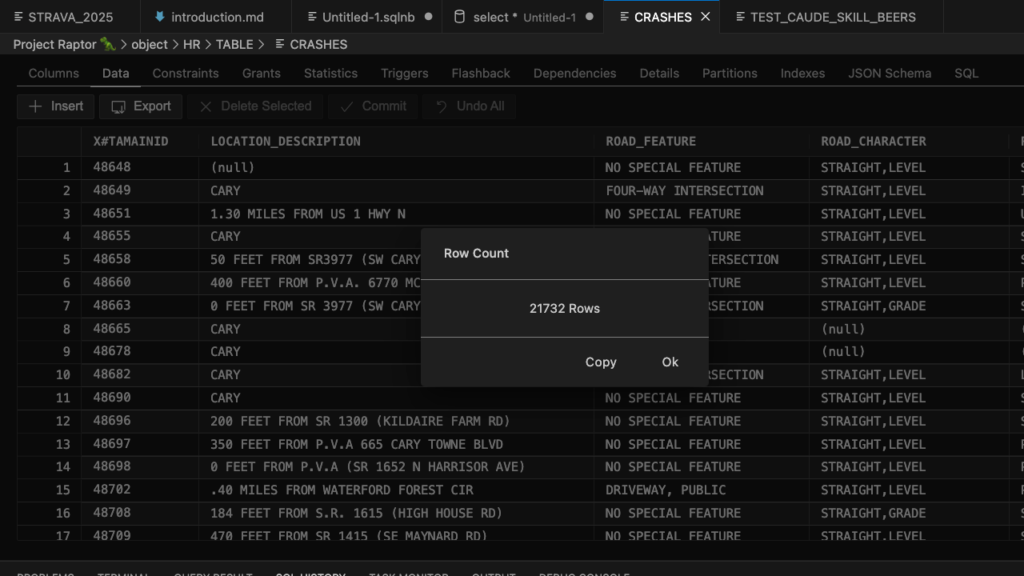

And one more: here’s my City of Cary public data set for traffic congestion, imported in just 4 SQLcl MCP tool calls: one to drop the existing table, one to create the table, one to perform the load, and one to check the number of rows.

And my table:

My skill

```markdown
---
name: oracle-csv-import
description: >
  Use this skill whenever a user wants to import a CSV (or TSV, pipe-delimited,
  or Excel) file into Oracle Database using the SQLcl MCP server. Trigger on any
  request to load, import, or insert a file into an Oracle table, even when it
  is phrased casually, such as "push this CSV to Oracle", "create a table from
  this data file", "put this into the DB", or "load this spreadsheet export into
  Oracle". Also use it when the user asks Claude to analyze a CSV before
  importing it, work out Oracle column types, determine the correct date format
  for Oracle LOAD, or preview the table SQLcl will generate. Always use this
  skill when data files and Oracle/SQLcl are mentioned together in any
  import-oriented context.
---

# Oracle CSV Import via SQLcl MCP

Your job is to **prepare the correct SQLcl configuration** and then run the
import using the SQLcl MCP tools (`run-sqlcl` / `run-sql`). The SQLcl `LOAD`
command handles DDL generation, table creation, and row insertion; your role
is to detect what could go wrong (especially date formats, delimiters, and
column sizes) and set the appropriate options before pulling the trigger.

**Critical limitation:** SQLcl column auto-sizing is based on scanning the
first N rows. Even if you set `SCAN_ROWS` to a large number, SQLcl internally
caps the scan at 5,000 rows. For files where the widest values only appear
further down, auto-sizing will produce columns that are too narrow and cause O...
```
